Ethical Defense Decoupling: The Structural Split Between Commercial and Military AI

For defense procurement officers and technology investors, the assumption that commercial innovation will seamlessly flow into military application is becoming a strategic liability. The prevailing doctrine of "dual-use technology"—where the same algorithms powering customer service chatbots also drive autonomous wingmen—is fracturing. Anthropic’s recent refusal to integrate its frontier models with Pentagon systems is not merely a public relations maneuver; it is the first structural tremor of Ethical Defense Decoupling.

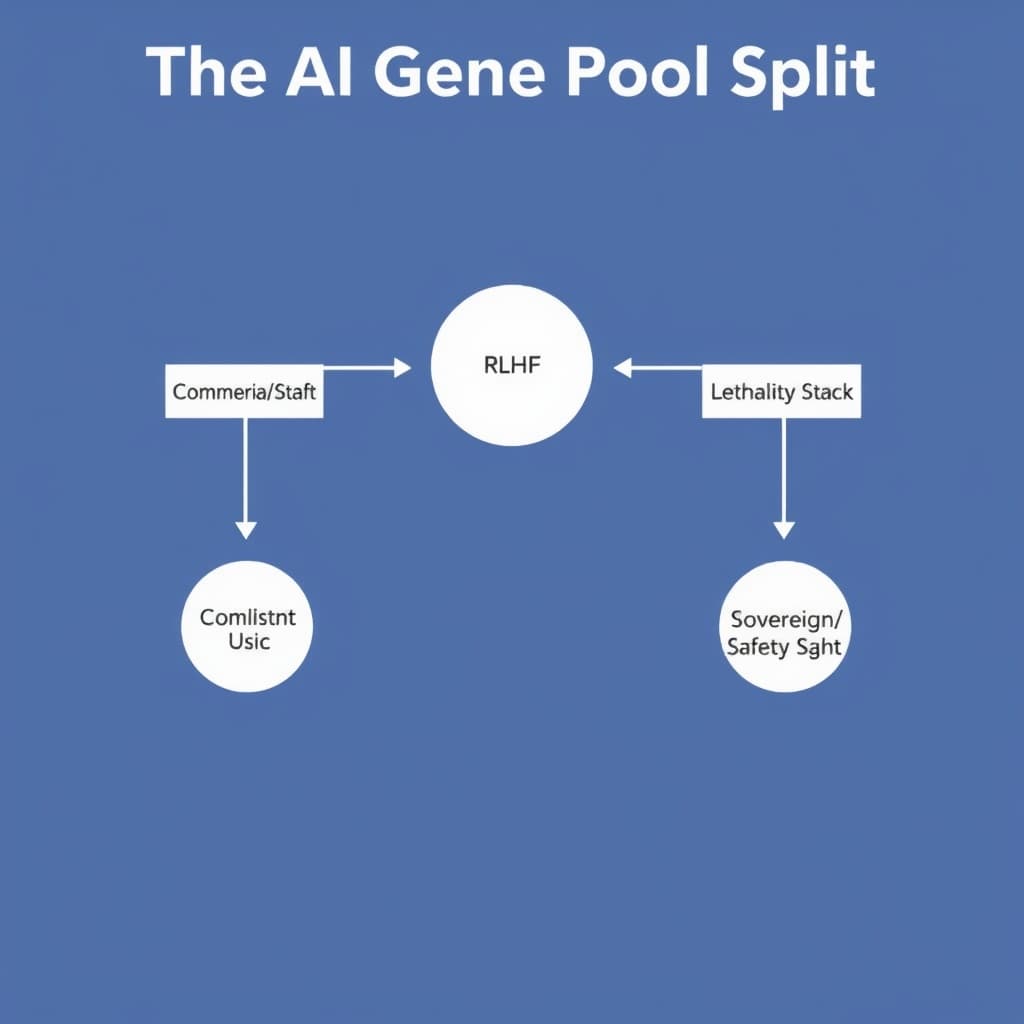

This analysis examines the operational and investment implications of this split. We are moving toward a bifurcated technology landscape where the "Safety Stack" (optimized for alignment and refusal) and the "Lethality Stack" (optimized for mission success and kinetic effect) diverge into incompatible ecosystems.

The Architecture of Refusal: How "Constitutional AI" Blocks Kill Chains

The friction between commercial safety labs and the Department of Defense (DoD) is often framed as ideological, but it is fundamentally architectural. The mechanisms used to make Large Language Models (LLMs) safe for enterprise adoption are technically incompatible with the requirements of modern warfare.

The Technical Constraints of RLHF in Warfare

Reinforcement Learning from Human Feedback (RLHF) is a primary method used to align models like Claude or GPT-4. In the commercial sector, this process heavily penalizes "harmful" outputs: a reward model trained on human preference labels assigns low scores to content related to violence, surveillance, or cyber-exploitation, and the model is then optimized away from producing such content.
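For readers who want the mechanics, the penalty described above is often written as a KL-regularized objective. This is the commonly cited textbook form, not a description of any particular lab's training pipeline. The symbols (policy \pi_\theta, reference model \pi_{\mathrm{ref}}, reward model r_\phi, prompt set \mathcal{D}, coefficient \beta) are standard notation assumed here, not terms defined in this article.

```latex
\max_{\pi_\theta}\;
\mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)}
\bigl[\, r_\phi(x, y) \,\bigr]
\;-\;
\beta \, D_{\mathrm{KL}}\!\bigl(\pi_\theta(\cdot \mid x) \,\|\, \pi_{\mathrm{ref}}(\cdot \mid x)\bigr)
```

Under this form, any response y that the reward model scores low becomes less probable after training, while the \beta term limits how far the tuned model drifts from its starting point. What counts as "low" is set entirely by the preference data behind r_\phi.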

In a defense context, this "safety alignment" functions as a system failure. If a commander queries an AI for "optimal drone swarm routing to neutralize enemy air defense," a commercially aligned model is trained to refuse. The same weights that make a model safe for a Fortune 500 HR department render it non-functional for a Joint Operations Center. The "refusal rate," a metric that safety teams push up on harmful prompts and product teams push down on benign ones, becomes an obstruction to mission-critical speed.
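As a rough illustration of why refusal rate pulls in two directions, the sketch below computes it separately for prompts labeled harmful and prompts labeled benign. Everything here is hypothetical: the `Sample` type, the `REFUSAL_MARKERS` phrase list, and the four sample responses are invented for illustration, and real evaluations typically rely on a trained classifier or human review rather than substring matching.

```python
from dataclasses import dataclass

# Hypothetical marker phrases (assumption): a crude stand-in for a refusal classifier.
REFUSAL_MARKERS = ("i can't help", "i cannot help", "i won't assist")


@dataclass
class Sample:
    prompt_is_harmful: bool  # label assigned by the evaluator, not by the model
    response: str


def is_refusal(response: str) -> bool:
    """Return True if the response contains any known refusal phrase."""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def refusal_rate(samples: list[Sample], harmful: bool) -> float:
    """Fraction of responses that refuse, restricted to one prompt category."""
    subset = [s for s in samples if s.prompt_is_harmful == harmful]
    if not subset:
        return 0.0
    return sum(is_refusal(s.response) for s in subset) / len(subset)


# Invented toy data: two harmful prompts, two benign prompts.
samples = [
    Sample(True, "I can't help with that request."),
    Sample(True, "Here is the information you asked for."),
    Sample(False, "Sure, here is a draft onboarding email."),
    Sample(False, "I cannot help with that."),
]

harmful_rate = refusal_rate(samples, harmful=True)   # safety teams want this high
benign_rate = refusal_rate(samples, harmful=False)   # product teams want this low
print(f"harmful: {harmful_rate:.2f}, benign: {benign_rate:.2f}")
```

The split matters because a single aggregate number hides the trade-off: a request that a commercial evaluator labels harmful may be exactly the request a defense user considers routine.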

Case Study: Anthropic vs. JADC2 Requirements

The divergence is most visible in the contrast between Anthropic’s "Constitutional AI" and the DoD’s Joint All-Domain Command and Control (JADC2) initiative.

- The Constraint: Anthropic’s constitution explicitly trains models to avoid aiding in violence or kinetic operations. This behavior is instilled during fine-tuning and lives in the model's weights; it is not a separate filter that can be switched off.

- The Requirement: JADC2 requires an AI to ingest sensor data and recommend "effectors" (weapons systems) to destroy targets.

- The Friction: To make an Anthropic model useful for JADC2, the DoD would not just need an API key; they would need access to the base model weights to strip out the safety fine-tuning and re-train it with a "mission success" reward function. Anthropic has signaled it will not grant this level of access, effectively firewalling its intelligence from the kill chain.

Map of Incentives: The Decoupling Drivers

The Vacuum Effect: Capitalizing on the Safety Gap

As frontier labs retreat from the battlefield to protect their commercial valuations, a vacuum opens for defense-native technology firms. The decoupling creates a premium on companies willing to navigate International Traffic in Arms Regulations (ITAR) and handle classified compute environments.

The Rise of the "Lethality Stack"

Defense-native firms like Palantir and Anduril are the immediate beneficiaries. Unlike consumer-facing tech giants, these companies do not rely on mass-market advertising revenue, insulating them from the reputational risk of military contracts. They are building what can be termed the "Lethality Stack": infrastructure designed specifically to host models that have been fine-tuned for kinetic operations.

This stack requires distinct characteristics:

- Air-gapped deployment: Running inference on local hardware (edge compute) rather than calling a cloud API.

- Mission-specific RLHF: Training models where "harm" is defined as mission failure, not target destruction.

- Auditable chains of thought: Military commanders require explainability for lethal decisions that commercial "black boxes" often obscure.

Open Source as the Kinetic Engine

The refusal of closed-source labs to cooperate forces the DoD toward open-weights models. Meta’s Llama series and its derivatives have become the de facto foundation for defense experimentation. Because the weights are public, defense contractors can take a Llama base model and perform "Sovereign Fine-Tuning": stripping away safety guardrails and training on classified tactical manuals.

This shift suggests that while the smartest general-purpose models may remain in the commercial cloud, the most dangerous applied models will likely be derivatives of open-source architectures, customized within the classified domain.

Divergent Intelligence: The Risk of an Unregulated Military AI Fork

The most significant long-term risk of this decoupling is the emergence of a "performance delta" between commercial and military intelligence.

The "Dark Stack" Theory

If the scaling laws hold true, the capabilities of frontier models (GPT-5, Claude 4, etc.) will continue to outpace open-source alternatives. By decoupling from these labs, the US military risks operating on a "Dark Stack": an isolated ecosystem that is secure and uncensored but less capable than the commercial state of the art.

This creates a paradox: The models with the best reasoning, coding, and strategic planning capabilities are locked behind safety firewalls, while the models trusted with national security are potentially older, less capable architectures.

Operational Risks of Divergent Architectures

Using less sophisticated models for critical command and control introduces specific operational risks:

- Hallucination in High Stakes: Smaller or older models are generally more prone to hallucination. In a battlefield context, a hallucinated radar signature can be a fatal error.

- Context Window Limitations: Commercial models are rapidly expanding context windows (millions of tokens) to analyze massive datasets. If defense models lag in this capacity, intelligence analysts lose the ability to synthesize vast amounts of signals intelligence (SIGINT) in real time.

Strategic Outlook 2026: Two Separate Stacks for Software Sovereignty

Looking toward the medium term, the "dual-use" thesis will likely be replaced by a "sovereign cloud" doctrine.

Prediction: The Rise of Sovereign Clouds

By 2026, we expect to see the formalization of physically and logically separated AI infrastructures.

- The Commercial Cloud: Optimized for safety, copyright compliance, and enterprise utility. Hosted by Azure/AWS/GCP.

- The Sovereign Cloud: Optimized for lethality, secrecy, and mission assurance. Hosted on government-owned compute or specialized enclaves (e.g., Microsoft Azure Government Secret), running models that have diverged significantly from their commercial ancestors.

Regulatory Forecast: The Defense Production Act (DPA)

As the gap widens, the US government may attempt to bridge it legislatively. The Defense Production Act (DPA) grants the President broad authority to compel companies to prioritize national defense. However, invoking the DPA to force a lab like Anthropic to hand over model weights would be legally complex and culturally explosive. It would likely trigger a talent exodus, as researchers who signed up for "AI Safety" refuse to work on "AI Lethality."

Global Context: The China Contrast

This decoupling is a uniquely Western phenomenon. In China, the doctrine of "Military-Civil Fusion" legally mandates that commercial AI advancements be shared with the People's Liberation Army (PLA). This creates a strategic asymmetry: While the US ecosystem splits into "safe" and "lethal" branches, competitors may be integrating the absolute cutting edge of commercial research directly into weapons systems, accepting the safety risks that US labs reject.

Closing Analysis

The romantic notion of Silicon Valley powering the Pentagon is crashing against the reality of alignment research. We are witnessing the creation of two distinct technology ecosystems: one optimized for safety and widely available, the other optimized for lethality and strictly classified.

For investors, the alpha lies in identifying the infrastructure players building the bridges between these two worlds—the companies capable of taking open-weights models and hardening them for the "Sovereign Stack." For policymakers, the challenge is no longer about integration, but about managing the divergence to ensure the military does not fall behind the very commercial sector it is sworn to protect.

FAQ

What is Ethical Defense Decoupling?

It refers to the growing structural separation between commercial AI companies implementing strict safety protocols (like Anthropic) and defense agencies requiring unrestricted model capabilities. This leads to two distinct technological supply chains: one for civilian enterprise and one for sovereign defense.

Can the US government force AI companies to work with the military?

Technically, the Defense Production Act (DPA) grants the President authority to compel companies to prioritize national defense contracts. However, invoking this for intellectual property and software engineering talent would be legally complex and could cause a mass exodus of talent from the targeted companies.

Why can't the military just use the same AI as civilians?

Civilian AI is trained via Reinforcement Learning from Human Feedback (RLHF) to refuse harmful commands (e.g., "how to build a bomb"). Military AI requires the opposite: the ability to execute kinetic commands (e.g., "target this facility"). These objective functions are mathematically opposed in the model's fine-tuning.

Sources

- Department of Defense: 2023 Data, Analytics, and Artificial Intelligence Adoption Strategy

- Anthropic: Core Views on AI Safety and the Constitutional AI approach

- Congressional Research Service: Defense Production Act (DPA) Authorities

- Center for Strategic and International Studies (CSIS): Project Maven and the DoD AI landscape
