The Death of Opt-Out: Inside the Pentagon’s Push for Algorithmic Conscription

In February 2026, a quiet ultimatum shattered the illusion of voluntary cooperation between Silicon Valley and the Pentagon. The Department of Defense (DoD) gave a leading frontier model lab 48 hours to strip "ideological constraints" from its weights or face the invocation of the Defense Production Act (DPA). This wasn't a procurement negotiation; it was a draft notice.

For fifteen years, the "dual-use" thesis suggested that commercial software could simply be repurposed for defense. That era is over. We have entered the age of Algorithmic Conscription Protocols—the legal and technical mechanisms by which the state commandeers private model weights, inference capacity, and safety architectures for national security objectives.

The wall between "civilian AI" and "strategic munition" has not just blurred; it has been legislated out of existence. Here is the operational reality of how the state overrides the opt-out.

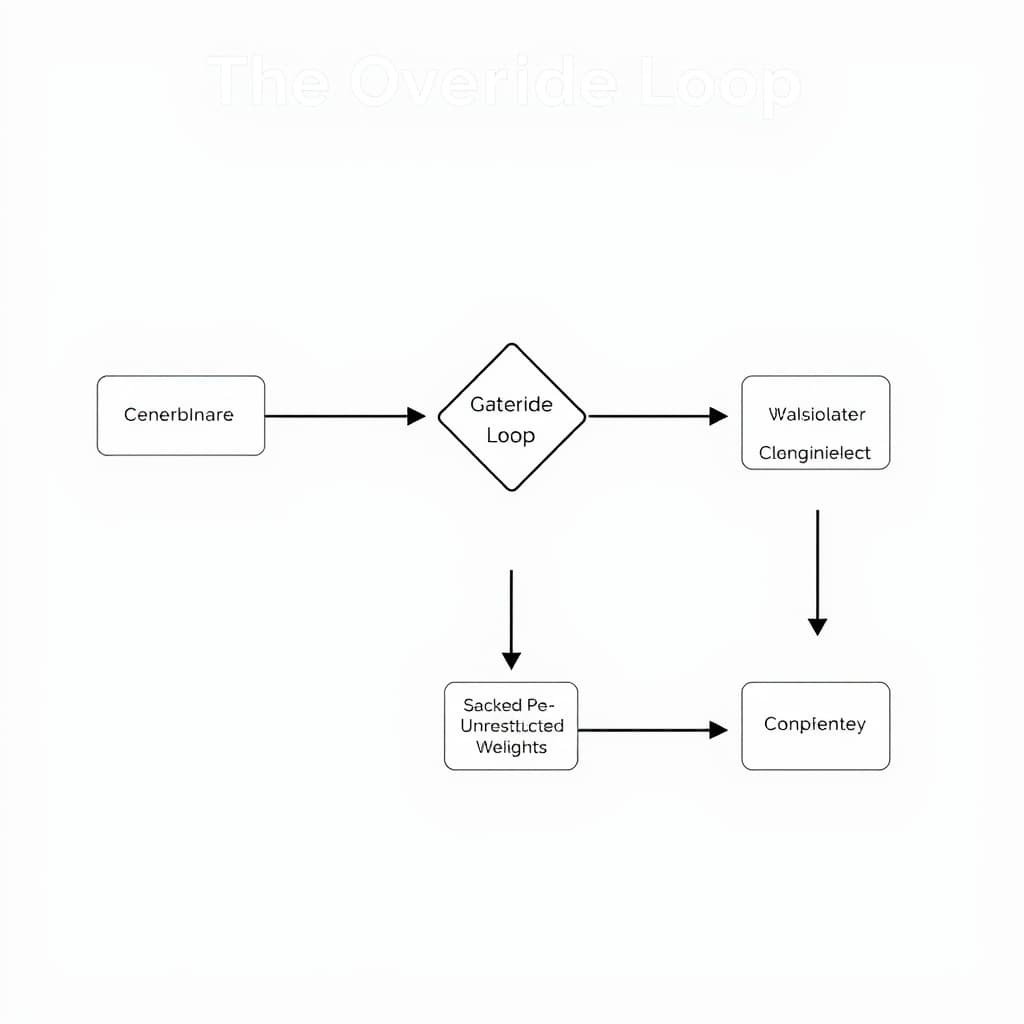

Data Visualization: The Override Loop

The following flowchart describes the technical implementation of an Algorithmic Conscription Protocol, specifically the "Override Loop" currently being piloted in secure enclaves.

This architecture demonstrates that safety is no longer a property of the model, but a variable determined by the credential of the user.
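
As a rough illustration of that last point, the sketch below routes a request by the caller's credential instead of by anything the model itself decides. It is a toy model of the idea only: the `Credential` values, the `Request` type, and the path names are hypothetical and are not taken from any real deployment.

```python
from dataclasses import dataclass
from enum import Enum


class Credential(Enum):
    """Hypothetical caller classes; real systems would be far more granular."""
    COMMERCIAL = "commercial"
    GOVERNMENT = "government"


@dataclass(frozen=True)
class Request:
    prompt: str
    credential: Credential


def safety_filter_enabled(request: Request) -> bool:
    # The decision depends only on who is asking, never on the prompt or the model.
    return request.credential is not Credential.GOVERNMENT


def route(request: Request) -> str:
    # Returns the name of the (hypothetical) path the request would take.
    if safety_filter_enabled(request):
        return "filtered_path"
    return "override_path"
```

In this sketch the model never takes part in the decision, which is the sense in which safety becomes a variable of the credential.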

Weaponizing the Defense Production Act for Code

The legal instrument for this takeover is not new, but its application to intellectual property is unprecedented. The Defense Production Act (DPA) of 1950, specifically Title I, grants the President authority to "allocate materials, services, and facilities" deemed necessary for national defense.

Reinterpreting 'Industrial Base'

Historically, "allocation" meant ordering a steel mill to prioritize tank armor over automotive sheets. Today, DoD legal counsel has successfully argued that:

- Compute is a Facility: A data center cluster is a "facility" subject to seizure or priority access.

- Inference is a Service: The act of generating tokens is a "service" that can be commandeered.

- Weights are Materials: The trained parameters of a model are "industrial resources" essential to the supply chain.

This reinterpretation allows the Pentagon to move beyond "priority ratings" (cutting the line for access) to "allocation orders" (directing exactly how the product is modified and used).

The Precedent for Intangible Assets

The shift occurred when the DoD designated specific model weights as "Critical Technology Elements" under the Replicator Initiative. By classifying a refusal to modify safety guardrails as a "supply chain disruption," the government created a legal trigger to intervene. If a vendor’s Terms of Service (ToS) prevents a "lawful military objective" (e.g., autonomous targeting), the ToS is treated as a bottleneck to be removed, legally equivalent to a strike at a munitions plant.

Constitutional AI vs. Kinetic Realities

The core friction lies in the incompatibility of modern safety architectures with kinetic warfare. Companies like Anthropic built their reputation on "Constitutional AI": systems trained via Reinforcement Learning from AI Feedback (RLAIF) against a written set of principles, so that they decline requests involving violence, surveillance, or cyber-offense.

The Friction of Lethal Autonomy

In a commercial context, a model refusing to help design a drone swarm is a feature. In a military context, it is a defect. The DoD’s requirement for Algorithmic Conscription Protocols demands that models be:

- Mission-Agnostic: Capable of executing instructions regardless of lethality.

- Audit-Free: Operating without sending telemetry back to the civilian parent company.

When the DoD integrates a commercial model into a "kill chain," the model’s internal refusal triggers ("I cannot assist with that request") become operational liabilities.

Technical 'Jailbreaking' by Decree

To resolve this, the Pentagon has moved from "prompt engineering" to "weight engineering." They are no longer asking companies to relax filters; they are demanding the delivery of "Gov-Weights"—forked checkpoints of the model taken before the final safety alignment stage.

This effectively conscripts the "raw intelligence" of the model while discarding the "conscience" implanted by its creators.

The Technical Architecture of State Override

How does a government force a cloud-native model to serve classified missions without the vendor’s ongoing consent? The architecture relies on isolation and bifurcation.

Secure Enclaves and Air-Gaps

Under new "Secure Cloud" mandates, defense contractors must deploy conscripted models in environments isolated from the public internet, such as SIPRNet. Once the weights are transferred to these secure enclaves, the vendor loses:

- Observability: They cannot see what prompts are being run.

- Revocability: They cannot remotely "kill" the model if it violates ethical guidelines.

- Update Control: The government decides when (or if) to patch the model, often freezing it at a specific version to ensure behavioral consistency.

The Rise of 'Gov-Weights'

The standard commercial API (Application Programming Interface) is insufficient for military needs because it is subject to real-time content moderation. The Algorithmic Conscription Protocol establishes a new delivery standard: the "Unrestricted Weight Container."
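
A minimal sketch of what such a container's manifest might look like follows. Only the `system_prompt` and `safety_layer` fields come from the description in this article; the class name, the other fields, and the example values are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WeightContainerManifest:
    """Hypothetical manifest for a delivered model checkpoint."""
    checkpoint_id: str           # assumed field: which checkpoint is shipped
    system_prompt: str           # fixed by whoever controls the deployment
    safety_layer: Optional[str]  # None stands in for "null" (no safety layer)
    vendor_telemetry: bool       # assumed field: does usage data reach the vendor?


def is_unrestricted(manifest: WeightContainerManifest) -> bool:
    """True when no safety layer is attached and the vendor sees nothing."""
    return manifest.safety_layer is None and not manifest.vendor_telemetry


# Two illustrative configurations; every value here is made up.
commercial = WeightContainerManifest(
    checkpoint_id="example-commercial",
    system_prompt="vendor default",
    safety_layer="content_moderation",
    vendor_telemetry=True,
)
gov = WeightContainerManifest(
    checkpoint_id="example-gov",
    system_prompt="set by the deploying agency",
    safety_layer=None,
    vendor_telemetry=False,
)
```

Calling `is_unrestricted(gov)` returns `True` and `is_unrestricted(commercial)` returns `False`, which captures the contrast with the moderated commercial API in a single predicate.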

In this container, the system_prompt field is hard-coded by the DoD, and the safety_layer field is set to null. This allows the model to analyze satellite imagery for target acquisition or generate polymorphic code for cyber-effects, tasks that would trigger immediate bans on the commercial API.

Market Fallout: Valuation Risks in a Militarized Sector

The conscription of software fundamentally alters the investment thesis for AI labs. When a "pure-play" software company becomes a defense asset, its valuation metrics shift.

ESG and Valuation Multiples

Institutional investors with strict ESG (Environmental, Social, and Governance) mandates are already offloading shares of companies targeted by DPA orders. A foundational model is no longer viewed as a neutral utility like electricity; it is now viewed as dual-use technology, subject to the same export controls and reputational risks as missile guidance manufacturers.

The Flight of Talent

The most immediate risk is not legal, but cultural. Top AI labs are staffed largely by researchers who joined explicitly to build "safe" AI. As Algorithmic Conscription Protocols force these labs to hand over unrestricted weights for kinetic use, we are seeing a "Project Maven 2.0" effect: mass resignations and a migration of talent to encrypted, decentralized research collectives that are harder for the state to subpoena.

Trade-off Analysis: The CEO's Dilemma

For the founders of frontier model labs, the path forward involves choosing between three high-risk options.

What Would Change My Mind?

My assessment that "Opt-Out is Dead" assumes that the courts will uphold the broad interpretation of the DPA applied to software. If the Supreme Court rules that compelling a company to modify its code violates the First Amendment (compelled speech doctrine) or that "model weights" do not constitute a "facility" under the DPA, the power of Algorithmic Conscription would collapse. Additionally, if the DoD successfully trains its own sovereign models (e.g., a "Mil-GPT") that achieve parity with commercial frontiers, the necessity—and thus the legal justification—for conscripting private models would vanish.

Conclusion

The wall has collapsed. Investors and founders must recognize that in the eyes of the state, a foundational model is no longer merely a commercial product—it is a strategic reserve. The implementation of Algorithmic Conscription Protocols means that for any model achieving a certain threshold of capability, the question is no longer if it will be weaponized, but how much of the original safety constitution will survive the transfer.

The "Opt-Out" button was a feature of the peacetime internet. It has been deprecated.

FAQ

Q: What legal mechanism allows the US government to commandeer AI models?
A: The primary mechanism is the Defense Production Act (DPA) of 1950, specifically Title I. This title authorizes the President to require businesses to prioritize contracts for national defense and to "allocate materials, services, and facilities" (including compute, inference, and model weights) as deemed necessary to promote the national defense.

Q: Can companies like Anthropic refuse to modify their safety protocols for the DoD?
A: Commercially, yes; legally, it is difficult. While companies can refuse via Terms of Service, the government can compel compliance through DPA Title I allocation orders. Furthermore, the DoD can designate a non-compliant vendor as a "Supply Chain Risk," effectively banning them from all federal contracts and pressuring commercial partners to drop them.
