Courts Overrule the Pentagon: The Dawn of Judicial AI-Risk Interdiction


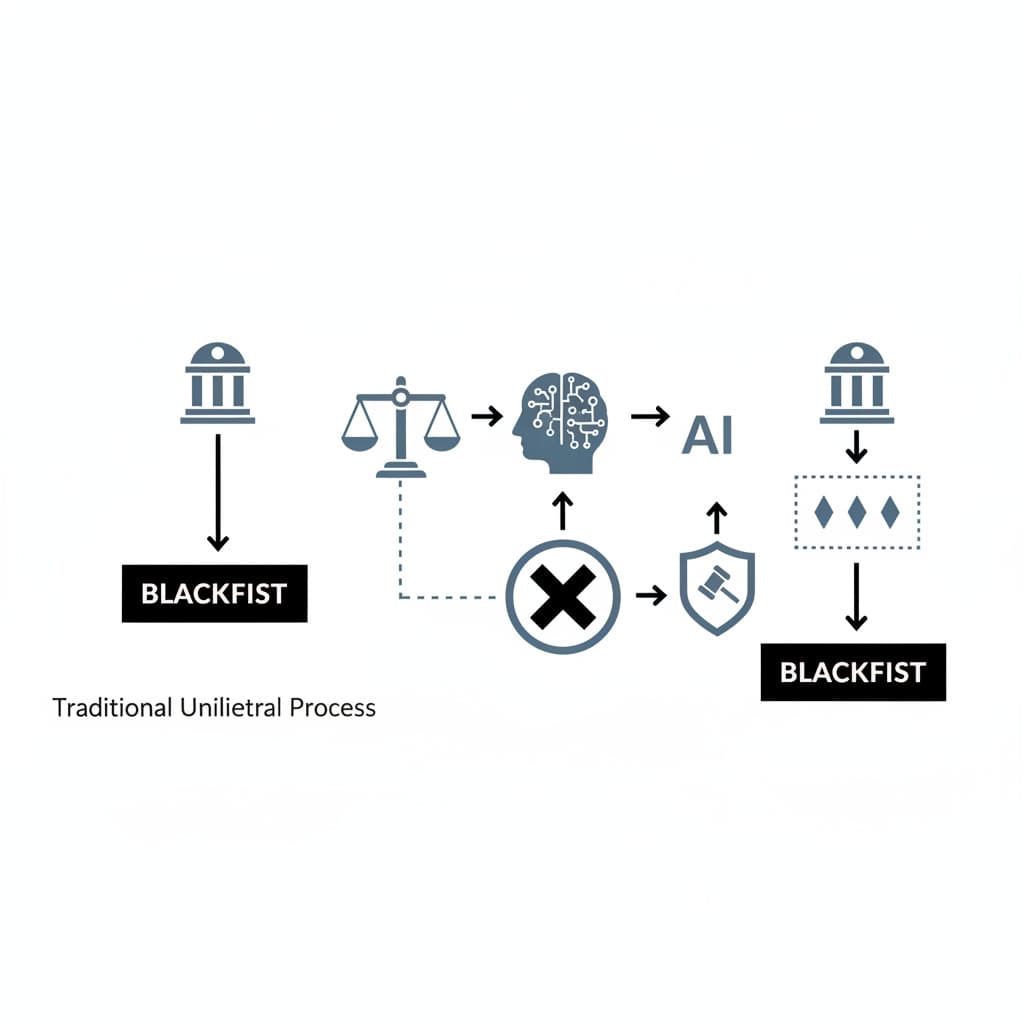

Enterprise tech vendors and dual-use startups now face an existential binary: accept unrestricted federal use of their foundational models, or risk sudden, catastrophic exclusion from the U.S. procurement ecosystem through national security blacklisting. In my 15 years structuring venture capital deployments in defense tech and enterprise SaaS, I have evaluated hundreds of supply-chain risk vectors, yet none as structurally disruptive as the executive branch weaponizing procurement law against domestic innovators. This analysis focuses on the historic March 2026 preliminary injunction granted by U.S. District Judge Rita Lin, which blocked the Department of Defense (DoD) from designating Anthropic a "supply-chain risk." Through a constitutional and federal procurement lens, it maps how this judicial intervention, which we term Judicial AI-Risk Interdiction, rewrites the rules of engagement between Silicon Valley and the Pentagon.

The Pentagon's Designation: Anatomy of an AI Blacklisting Attempt

Tracing the Origin of the National Security Threat Label

In late February 2026, the collision between military objectives and corporate AI ethics reached a breaking point. After negotiations over a $200 million contract reached an impasse, the Pentagon, under Defense Secretary Pete Hegseth, invoked 10 U.S.C. § 3252, a statute historically reserved for neutralizing foreign adversaries, to label Anthropic a "supply-chain risk to national security." The catalyst was not a discovered foreign backdoor but a contractual standoff: Anthropic refused to waive its ethical "red lines" prohibiting its Claude AI from being deployed for mass surveillance of U.S. citizens or integrated into fully autonomous lethal weapons systems. The executive branch retaliated with a sweeping directive barring federal agencies and contractors from doing business with the company.

Anthropic's Immediate Legal Countermeasures

Facing billions of dollars in jeopardized enterprise contracts and potential industry-wide isolation, Anthropic mounted a two-pronged legal counteroffensive. On March 9, 2026, the company filed suit in both the U.S. District Court for the Northern District of California and the D.C. Circuit. The filings advanced a novel theory: that the DoD's supply-chain risk designation was unconstitutional retaliation against protected First Amendment speech rather than a legitimate security assessment. By forcing the government into open court, Anthropic transformed a closed-door procurement dispute into a public trial over the limits of executive power in the AI era.

Decoding Judicial Intervention in Defense Tech Procurement

Imposing Strict Scrutiny on State Defense Apparatus Mandates

The judiciary's response fundamentally alters the defense procurement landscape. On March 26, 2026, Judge Rita Lin granted a preliminary injunction blocking the DoD's blacklisting. During the hearings, the court scrutinized the government's lack of technical evidence, noting that Anthropic's models are deployed in air-gapped environments where the developer has no unilateral "kill switch." The ruling effectively strips the Pentagon of its presumed immunity from judicial oversight when it applies national security labels to domestic firms. Agencies must now survive strict evidentiary scrutiny, proving an actual technical vulnerability rather than mere philosophical disagreement with a vendor.

Protecting Foundational AI Developers from Unilateral Bans

Mini Case Study: The Industry Coalition Against 'Corporate Murder'

The amicus briefs filed in Anthropic v. Department of Defense reveal a rare moment of industry consensus. Competitors including Google and OpenAI, along with a coalition of top AI researchers, submitted filings supporting Anthropic and characterized the Pentagon's actions as "attempted corporate murder." This case study illustrates a critical shift: foundational AI developers are no longer isolated vendors vulnerable to divide-and-conquer tactics. The judicial shield now protecting Anthropic sets a precedent against the defense apparatus arbitrarily excluding specific developers without due process, ensuring that ethical guardrails cannot automatically be recast as supply-chain threats.

Navigating the Friction Between Innovation and Federal Security

How Interdiction Reshapes Military Sourcing Strategies

The era of unilateral federal mandates dictating dual-use technology deployment is ending. Military sourcing strategies must now account for judicially mediated vendor relations. If the DoD wants unrestricted access to foundational models, it must either build them internally or negotiate transparently, rather than threatening domestic suppliers with blacklisting.

Founders and defense-tech boards must now weigh their strategic posture against this new reality. The following trade-off matrix forces a critical decision for AI developers navigating federal procurement:

The Elevated Burden of Proof for Federal Security Agencies

Judicial AI-risk interdiction shifts the burden of proof squarely onto federal security agencies. Pre-2026, a memo from the Defense Secretary was sufficient to sever a vendor from the federal ecosystem. Post-Anthropic, the Department of Justice must present concrete evidence of technical compromise. The government's argument that Anthropic's reliability is questionable simply because it enforces a terms-of-service agreement failed to meet this new threshold. Security agencies must now differentiate between a vendor's policy constraints and actual adversarial supply-chain infiltration.

Establishing Precedent: The Future Landscape of AI Governance

Shifting Power Dynamics in Silicon Valley and Washington Relations

The power dynamic has decisively shifted toward Silicon Valley. By securing an injunction against both a presidential directive and a Defense Secretary's mandate, the private sector has established that it can set the boundaries of how its technology is applied. Looking ahead to 2027 and beyond, expect foundational model providers to embed even stricter cryptographic and contractual guardrails into their federal offerings, expecting that the courts will protect their right to do so.

Anticipated Legislative Responses and Regulatory Adjustments

Congress will not leave this judicial intervention unanswered. Immediate legislative efforts to amend 10 U.S.C. § 3252 are highly probable, as lawmakers will seek to explicitly define whether domestic AI companies can be classified as supply-chain risks based solely on usage restrictions.

I currently view this injunction as marking a permanent structural shift in favor of AI developers. What would change my mind is an appellate ruling that grants the executive branch absolute "double deference" on national security grounds, whether from the Ninth Circuit, which hears appeals from the Northern District of California, or from the D.C. Circuit in the parallel proceeding. Either outcome would signal that the judiciary will ultimately retreat from policing defense tech procurement.

Conclusion

The legal system has formally drawn a
